Benchmarking Large Riak Data Types: A Potential Fix

A potential fix

As I mentioned in my last post, Russell Brown is working on a feature branch of riak_dt which may fix some of this. Today, I ran my benchmark against a locally compiled Riak, then rebuilt it with the potential fix and ran the benchmark again.

My environment

I’m on Mac OS X 10.9.5, and I ran the benchmark against a single Riak 2.0.2 node running locally, installed from source. I have OTP R17, and therefore had to make a few changes in the Riak build system:

- Allow 17 in the accepted versions regex

- Disable `warnings_as_errors` in a few of the dependencies as needed

- Exclude rabbit_common due to conflicting modules

To include the patched version, I changed the riak_dt branch that riak_kv depends on to bug/rdb/faster-merge-orswot.
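The branch name suggests the fix targets how ORSWOTs (observed-remove sets without tombstones) are merged. I haven't verified what the patch actually changes, so as background only, here is a minimal Python sketch of the textbook ORSWOT merge. It is not riak_dt's code: the `Orswot` class and `merge` function are hypothetical, and riak_dt's deferred removes are left out. What it illustrates is that a merge visits every element on both sides, so its cost grows at least linearly with the size of the set.

```python
from dataclasses import dataclass, field

Dot = tuple[str, int]  # (actor id, counter)


@dataclass
class Orswot:
    # Version vector: highest counter seen per actor.
    clock: dict[str, int] = field(default_factory=dict)
    # Each present element maps to the set of dots that added it.
    entries: dict[str, frozenset[Dot]] = field(default_factory=dict)

    def add(self, actor: str, elem: str) -> None:
        counter = self.clock.get(actor, 0) + 1
        self.clock[actor] = counter
        # A fresh add replaces the element's previous dots with one new dot.
        self.entries[elem] = frozenset({(actor, counter)})

    def remove(self, elem: str) -> None:
        # Observed-remove: drop the element and its dots. The clock still
        # covers those dots, so a later merge will not resurrect them.
        self.entries.pop(elem, None)

    def seen(self, dot: Dot) -> bool:
        actor, counter = dot
        return self.clock.get(actor, 0) >= counter

    def value(self) -> set[str]:
        return set(self.entries)


def merge(a: Orswot, b: Orswot) -> Orswot:
    out = Orswot()
    # Version vectors merge by pointwise maximum.
    for actor in a.clock.keys() | b.clock.keys():
        out.clock[actor] = max(a.clock.get(actor, 0), b.clock.get(actor, 0))
    # Every element on either side is visited: cost is linear in set size.
    for elem in a.entries.keys() | b.entries.keys():
        da = a.entries.get(elem, frozenset())
        db = b.entries.get(elem, frozenset())
        keep = da & db  # dots both replicas still hold
        keep |= {d for d in da - db if not b.seen(d)}  # adds b never saw
        keep |= {d for d in db - da if not a.seen(d)}  # adds a never saw
        if keep:
            out.entries[elem] = frozenset(keep)
    return out


r1, r2 = Orswot(), Orswot()
r1.add("r1", "x")
r2 = merge(r2, r1)   # r2 observes x
r2.remove("x")       # r2 removes the x it observed
r1.add("r1", "y")    # meanwhile r1 adds y
print(sorted(merge(r1, r2).value()))  # ['y']
```

Because r2's clock covers the dot that added x, the merge treats x as removed rather than as a concurrent add, and no tombstone is needed.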

Results

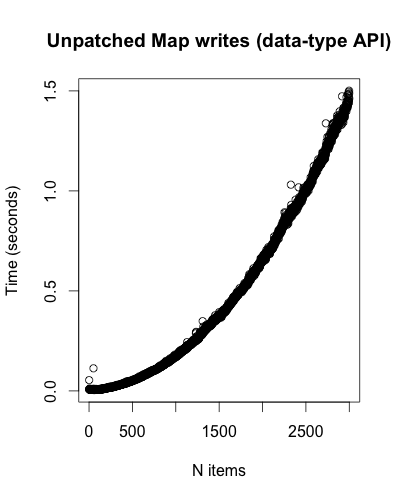

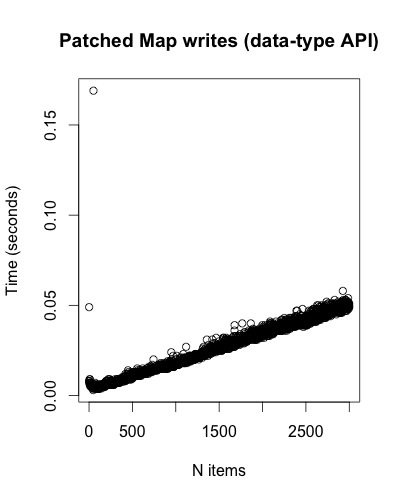

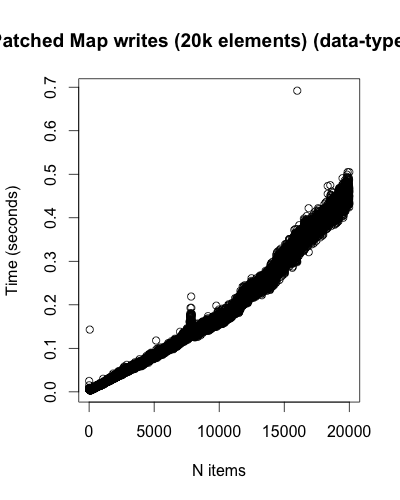

Map write performance has improved significantly. Not only does its growth no longer look exponential, it is also much faster! Notice the time scales:

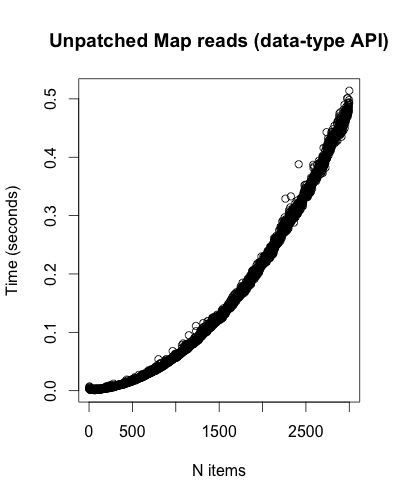

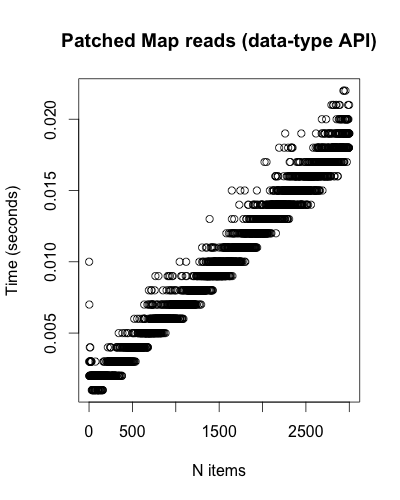

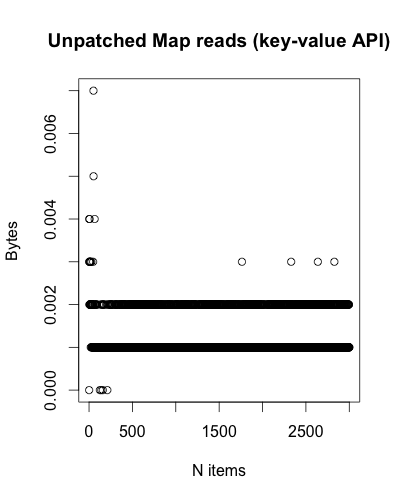

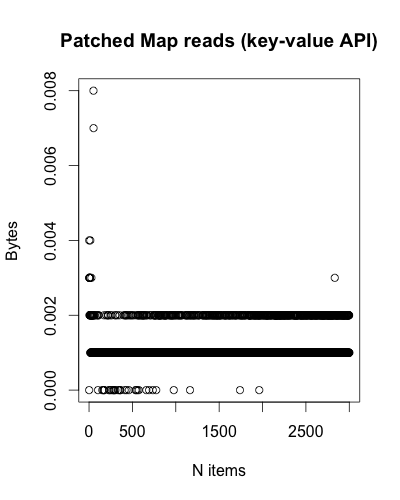

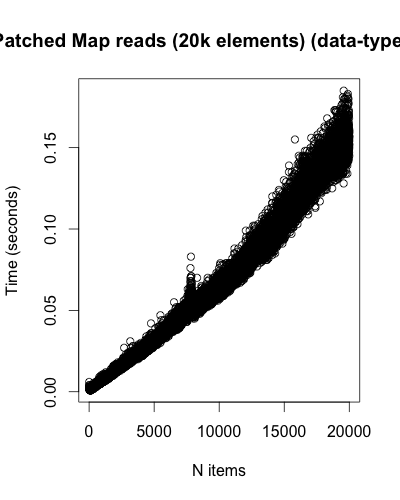
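One rough way to check the "no longer exponential" impression against the raw numbers is to fit a power law on a log-log scale: if time grows like c * n**k, the slope of log(time) against log(size) estimates k, while exponential growth shows up as a slope that keeps rising as size grows. A minimal sketch in plain Python; `fit_slope` and `loglog_exponent` are hypothetical helpers, not part of the benchmark, and the timings below are synthetic.

```python
import math


def fit_slope(xs: list[float], ys: list[float]) -> float:
    """Ordinary least-squares slope of ys regressed on xs."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx


def loglog_exponent(sizes: list[int], times: list[float]) -> float:
    """Estimate k assuming time ~ c * size**k (k near 1 means linear)."""
    return fit_slope([math.log(s) for s in sizes],
                     [math.log(t) for t in times])


# Synthetic timings for illustration only, not benchmark results.
sizes = [100, 200, 400, 800, 1600]
linear = [0.002 * s for s in sizes]
exponential = [math.exp(s / 200) for s in sizes]

print(round(loglog_exponent(sizes, linear), 3))  # 1.0
# For exponential growth, the exponent keeps rising from window to window.
print(loglog_exponent(sizes[:3], exponential[:3]) <
      loglog_exponent(sizes[2:], exponential[2:]))  # True
```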

The same applies for Map reads:

The stripes in the Patched Map reads chart are likely due to the limited precision the benchmark uses when printing times. They can be ignored.

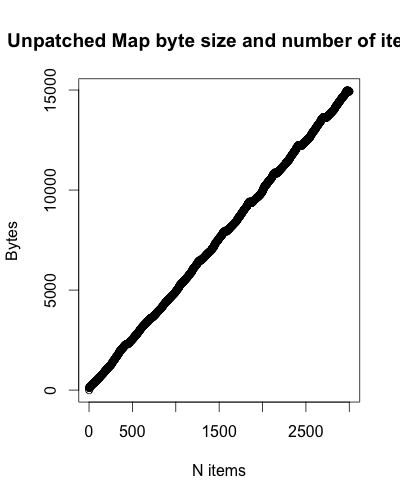

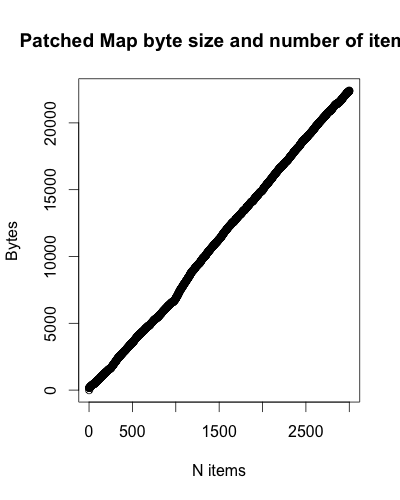

However, there was a regression (a tradeoff) with regard to size: the patched version appears to grow at a faster rate, though still linearly.

The blip around 1,000 items may be due to the change in the size of the elements from 3 to 4 bytes.
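If the elements are the decimal strings of a counter (an assumption on my part; the encoding isn't shown here), the raw element payload is piecewise linear, and its per-element slope steps from 3 to 4 bytes right around 1,000 items, which lines up with the blip. A quick sketch of that arithmetic; `payload_bytes` and `marginal_bytes` are hypothetical helpers, and per-element CRDT metadata is not modeled.

```python
def payload_bytes(n: int) -> int:
    """Total bytes of n elements, assuming element i is str(i) for i in 0..n-1.

    Hypothetical encoding: ASCII decimal strings, no CRDT metadata.
    """
    return sum(len(str(i)) for i in range(n))


def marginal_bytes(n: int) -> int:
    # Extra payload from growing the set from n to n + 1 elements.
    return payload_bytes(n + 1) - payload_bytes(n)


for n in (998, 999, 1000, 1001):
    print(n, marginal_bytes(n))
# 998 3
# 999 3
# 1000 4
# 1001 4
```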

There are no visually significant changes for Map reads using the key-value API.

20k Elements

Testing the unpatched version with 20,000 elements would have been unbearably slow, but I did test the patched version to give a better idea of how it behaves at that size. It’s not quite what I was expecting, but it is still much faster than the unpatched version with 3,000 elements.

Raw data

Future work

- Test the patched Set implementation.

- Test against an actual cluster rather than a single local node.

- Make a `basho_bench` version of the benchmark.

Once again, big thanks to Russell Brown for the quick turn-around on this! We’re looking forward to using this change once it is officially released. We are also eagerly anticipating delta-mutation, which should bring the linear growth (for writes) down to amortized constant time, if I’m understanding the change correctly.
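As a rough illustration of why deltas could help (a toy model, not riak_dt's delta-mutation work, whose details I haven't looked at), compare two ways of propagating writes to a grow-only set. A classic state-based mutator hands back the whole new state, so the data shipped per write grows with the set; a delta mutator hands back only the one-element change, which joins into a replica the same way. The `GSet` class and `replicate` function are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class GSet:
    """Toy grow-only set: a state-based CRDT whose join is set union."""
    elems: set[str] = field(default_factory=set)

    def add_full(self, e: str) -> "GSet":
        # Classic mutator: returns a copy of the entire new state.
        self.elems.add(e)
        return GSet(set(self.elems))

    def add_delta(self, e: str) -> "GSet":
        # Delta mutator: returns only the change as a one-element state.
        self.elems.add(e)
        return GSet({e})

    def join(self, other: "GSet") -> None:
        self.elems |= other.elems


def replicate(n: int, use_deltas: bool) -> tuple[GSet, int]:
    """Apply n adds at a source, shipping each update to one replica.

    Returns the replica and the total number of elements shipped.
    """
    source, replica = GSet(), GSet()
    shipped = 0
    for i in range(n):
        e = f"e{i}"
        update = source.add_delta(e) if use_deltas else source.add_full(e)
        shipped += len(update.elems)
        replica.join(update)
    return replica, shipped


full_replica, full_cost = replicate(1000, use_deltas=False)
delta_replica, delta_cost = replicate(1000, use_deltas=True)
assert full_replica.elems == delta_replica.elems  # both converge
print(full_cost, delta_cost)  # 500500 1000
```

Shipping full state costs 1 + 2 + ... + n elements in total (linear per write), while deltas cost n in total (constant per write), and both replicas end up in the same state.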

kms